BIOS

(Bidirectional Input Output System)

© 2002-2003 by BIOS team

Background

In the course of history there have been many attempts to understand how images are processed until they finally appear as "impressions" in the mind. Due to the lack of sufficiently sensitive sensors (and maybe also for pragmatic reasons), one of the first experiments involved showing a certain picture to a macaque monkey and subsequently shock-freezing the animal. Its brain was then removed in what was hoped to be a state "identical" with the moment of discrete perception. Because the monkey had previously been injected with a radioactive fluid, it was possible to produce an X-ray exposure showing a distorted version of the picture in an area at the rear of the brain. Other perception-capture experiments were made by attaching invasive electrodes directly to the retinas of a cat's eyes. The result was a very noisy black-and-white video; the resolution was very poor, but the original image remained clearly recognizable.

These are just two examples of the "normal" methods still used by neurologists. We therefore did not want our apparatus to have the clean, highly polished appearance that commercial companies would prefer for their images. Instead, the visitor ought to receive some sensation of how one of the countless test monkeys might have felt before perishing in the course of the scientific quest to create the theoretical basis for our machine. However, we did not build our apparatus solely in order to protest against such experiments. It also has a deep connection to the old philosophical problem of reality, or more precisely, of distinguishing between what is "real" and what is "hallucination."

Because the transformation of light energy into the language of the brain takes time, our seeing is always delayed. The visual and aural impulses emitted by a nearby object reach our brain at different times. Thus, due to the divergent temporal performance of our sensory organs, events that are objectively simultaneous are subjectively dislocated in time. Although these differences are very slight in terms of human perception, use of the term "simultaneous" is justified in neither the physiological nor the quantum-mechanical sense. Everything that one sees, hears, smells and feels occurred prior to one's perception of it. In other words: we "scan" reality much as a film captures it in frames. A feeling of time is generated by the clocking of this scanning, by the density of registered changes. It is very evident how dependent perception is on the biological condition of the perceiving creature. A cold lizard perceives time more slowly than does a warm one. In stressful situations, time seems to pass faster than when we are enjoying a peaceful rest.

Besides the above problems, one runs into real, and not purely linguistic, difficulties when trying to decide whether it would be possible to distinguish between a simulation of all senses (by some kind of machine not yet invented) and the "real" world. At the moment, we are far from building such a machine, but we imagine that at least some of our readers have had dreams in which they touched, heard, saw or even smelled things as vividly as if they were awake.

One way of gaining a sense of what perceptual interpretation means would be to amplify it. In other words: if we found a way of tapping the perceived signal after its transformation, then it could be "fed back" in order to be transformed again, and this re-transformation would be repeated an undefined number of times and amplified in the process. Basically, that is what BIOS does.

The Apparatus

An exterior audiovisual perception path forming a closed loop with the natural path is generated. The viewer is invited to immerse himself in his own (physiological) perception.

The attached electrodes transfer signals from the three sensory areas of the cerebral cortex, namely the visual cortex V1 along with the two auditory cortices, to an electroencephalograph (EEG). A custom-built interface translates the voltage curves of the EEG into binary data. Thus it is possible to algorithmically "re-translate" this information into audiovisual signals. As output media, the head-mounted display (HMD) and the headphones represent the electromechanical part of the cycle. The output continuously runs (back) into the biological perception apparatus, resulting in the development of feedback.
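The step from EEG voltage curves to binary data can be pictured as a simple quantization. The following is only a minimal sketch and not the project's actual interface: the function names, the input range of ±100 µV and the 8-bit resolution are assumptions chosen for illustration.

```python
def quantize_eeg(samples_uv, full_scale_uv=100.0, bits=8):
    """Map voltages (in microvolts) to unsigned integer codes.

    full_scale_uv and bits are assumed values, not taken from the BIOS hardware.
    """
    levels = (1 << bits) - 1
    codes = []
    for v in samples_uv:
        # Clip to the assumed input range, then scale to 0..levels.
        clipped = max(-full_scale_uv, min(full_scale_uv, v))
        codes.append(round((clipped + full_scale_uv) / (2 * full_scale_uv) * levels))
    return codes


def pack_codes(codes):
    """Serialize 8-bit codes as raw bytes, one byte per sample."""
    return bytes(codes)


if __name__ == "__main__":
    curve = [-120.0, -50.0, 0.0, 50.0, 120.0]  # hypothetical voltage curve
    print(pack_codes(quantize_eeg(curve)).hex())
```

Values outside the assumed range are clipped rather than wrapped, so a sudden spike saturates instead of producing a misleading low code.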

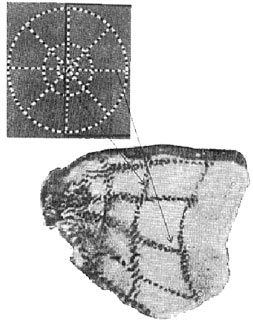

The neurological term "retinotopy" describes the pattern of projection, that is, the way the X/Y position of a point in the visual field is represented on the surface of the primary visual cortex (V1).

On the basis of the X/Y position and frequency of an electrode, the computer calculates the X/Y position and color of a pixel in the resulting image. The time flow is represented by the Z (depth) value. Missing intermediate values are interpolated (synthesized). The accompanying image shows the system of conversion (that is to say: basic principles).
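The conversion just described can be sketched in a few lines. This is a hedged illustration, not the BIOS software: the normalized electrode coordinates, the 1 to 40 Hz frequency band, the linear frequency-to-hue mapping and the inverse-distance interpolation are all assumptions, and every function name is hypothetical.

```python
import colorsys


def electrode_to_pixel(x, y, freq_hz, width=64, height=64, f_min=1.0, f_max=40.0):
    """Turn one electrode reading into (px, py, (r, g, b)).

    x and y are electrode positions normalized to 0..1 (assumption).
    """
    px = min(width - 1, int(x * width))
    py = min(height - 1, int(y * height))
    # Map the clamped frequency linearly onto a hue (assumed color scheme).
    t = (min(f_max, max(f_min, freq_hz)) - f_min) / (f_max - f_min)
    r, g, b = colorsys.hsv_to_rgb(t * 0.75, 1.0, 1.0)
    return px, py, (round(r * 255), round(g * 255), round(b * 255))


def interpolate_frame(pixels, width=64, height=64):
    """Fill all missing pixels by inverse-distance weighting of the known ones.

    pixels must contain at least one (px, py, color) entry.
    """
    frame = []
    for j in range(height):
        row = []
        for i in range(width):
            exact, wsum, acc = None, 0.0, [0.0, 0.0, 0.0]
            for px, py, color in pixels:
                d2 = (i - px) ** 2 + (j - py) ** 2
                if d2 == 0:
                    exact = color  # an electrode sits exactly on this pixel
                    break
                w = 1.0 / d2
                wsum += w
                for k in range(3):
                    acc[k] += w * color[k]
            if exact is not None:
                row.append(exact)
            else:
                row.append(tuple(round(a / wsum) for a in acc))
        frame.append(row)
    return frame


def build_volume(readings_over_time, width=64, height=64):
    """Stack one interpolated frame per time step; the list index plays the role of Z."""
    return [
        interpolate_frame(
            [electrode_to_pixel(x, y, f, width, height) for x, y, f in step],
            width, height)
        for step in readings_over_time
    ]
```

A call such as `build_volume([[(0.2, 0.3, 10.0), (0.8, 0.6, 25.0)]], 16, 16)` yields a single 16 by 16 frame; each further time step adds one more layer along the depth axis.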

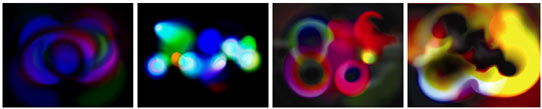

For BIOS we took the artistic liberty of visualizing "output" as subtly colored pulsations and waves of light. In the stereoscopic HMD, these trembling, breathing, flowing flares sometimes rouse visual associations with fire, smoke and liquid. They also bear some resemblance to the images seen if one presses the fingers against one's eyes.

The auditory cortex is similar, but somewhat simpler, in its pattern. The cochlea forms a spiral structure behind the ear. Sounds are translated for the biological cognitive system by the movement of extremely fine villi, which emit neuronal impulses. Each villus has a nerve connection leading to the auditory cortex, which is again spiral in form. If we imagine the cochlea to be placed directly on top of the auditory cortex, then the connections would be parallel. Three signals are received, corresponding to the bass, middle and treble ranges. The conversion corresponds to a Fourier (algorithmic) analysis, which is interpolated to the original width and re-synthesized afterwards.
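One way to picture the audio path is to stretch the three band levels into a wider spectrum and then turn that spectrum back into sound. The sketch below is an assumption-laden illustration rather than the BIOS algorithm: it uses plain linear interpolation and additive sine synthesis, and the function names, the 55 Hz base frequency and the 8000 Hz sample rate are hypothetical.

```python
import math


def interpolate_bands(bands, width):
    """Linearly stretch a few band levels (e.g. bass, mid, treble) to `width` bins."""
    if width == 1:
        return [bands[0]]
    spectrum = []
    for k in range(width):
        pos = k * (len(bands) - 1) / (width - 1)  # fractional index into bands
        lo = int(pos)
        hi = min(lo + 1, len(bands) - 1)
        frac = pos - lo
        spectrum.append(bands[lo] * (1 - frac) + bands[hi] * frac)
    return spectrum


def resynthesize(spectrum, n_samples, base_hz=55.0, sample_rate=8000):
    """Additive synthesis: bin k drives a sine at (k + 1) * base_hz.

    Keep len(spectrum) * base_hz below sample_rate / 2 to avoid aliasing.
    """
    norm = sum(spectrum) or 1.0  # normalize so the output stays within -1..1
    signal = []
    for n in range(n_samples):
        t = n / sample_rate
        s = sum(a * math.sin(2 * math.pi * (k + 1) * base_hz * t)
                for k, a in enumerate(spectrum))
        signal.append(s / norm)
    return signal


if __name__ == "__main__":
    levels = [0.9, 0.4, 0.1]  # hypothetical bass, mid and treble levels
    audio = resynthesize(interpolate_bands(levels, 32), n_samples=800)
    print(len(audio), max(audio))
```

Linear interpolation is the simplest choice here; a smoother curve through the three levels would change the timbre but not the overall structure of the sketch.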

The above description conveys the current state of the project (2002/2003). Enhanced versions of BIOS, involving more advanced hardware and software for audiovisual cortex-pattern recognition and a set-up improved for visitors, are in development. We are currently looking for further financial and technical support, so if you would like to promote this project, see the extended proposal (PDF) and/or contact us.